Today we're re-launching Fitabase Engage, our unified platform for designing and deploying real-time, interactive research experiences across mobile and wearable devices. It's our most ambitious product release since the core platform I created 14 years ago. And it feels fitting to introduce it where Fitabase began, at the annual Society of Behavioral Medicine conference in Chicago.

This has been a long time coming. About six years ago, we began prototyping wrist-based research experiences on the Fitbit OS smartwatch platform. Those early efforts required significant customization and developer time, which meant we could only support a handful of studies each year. But that work was invaluable. We learned how participants respond to in-the-moment prompts, how researchers think about study design, and what it takes to make these experiences feel natural and reliable.

While Google has continued to invest in Fitbit's core device category, Wear OS replaced Fitbit OS, and it became clear we needed a new smartwatch platform that could support both iOS and Android. Lacking one, we turned our focus to mobile, which opened up new possibilities. In particular, we saw how wearable data could power more responsive EMA and JITAI interventions on the phone. At the same time, much of the backend work we had already invested in carried forward.

In 2025, an important update to the Garmin Connect IQ platform created new opportunities on the wearable side. We were finally able to support the kinds of real-time prompting, data transfer, and user experiences we had been working toward, on devices that work across both iOS and Android.

What emerged from all of this is Fitabase Engage. It brings together mobile experiences, wearable integrations, light SMS interactions, and backend infrastructure into a single, cohesive platform.

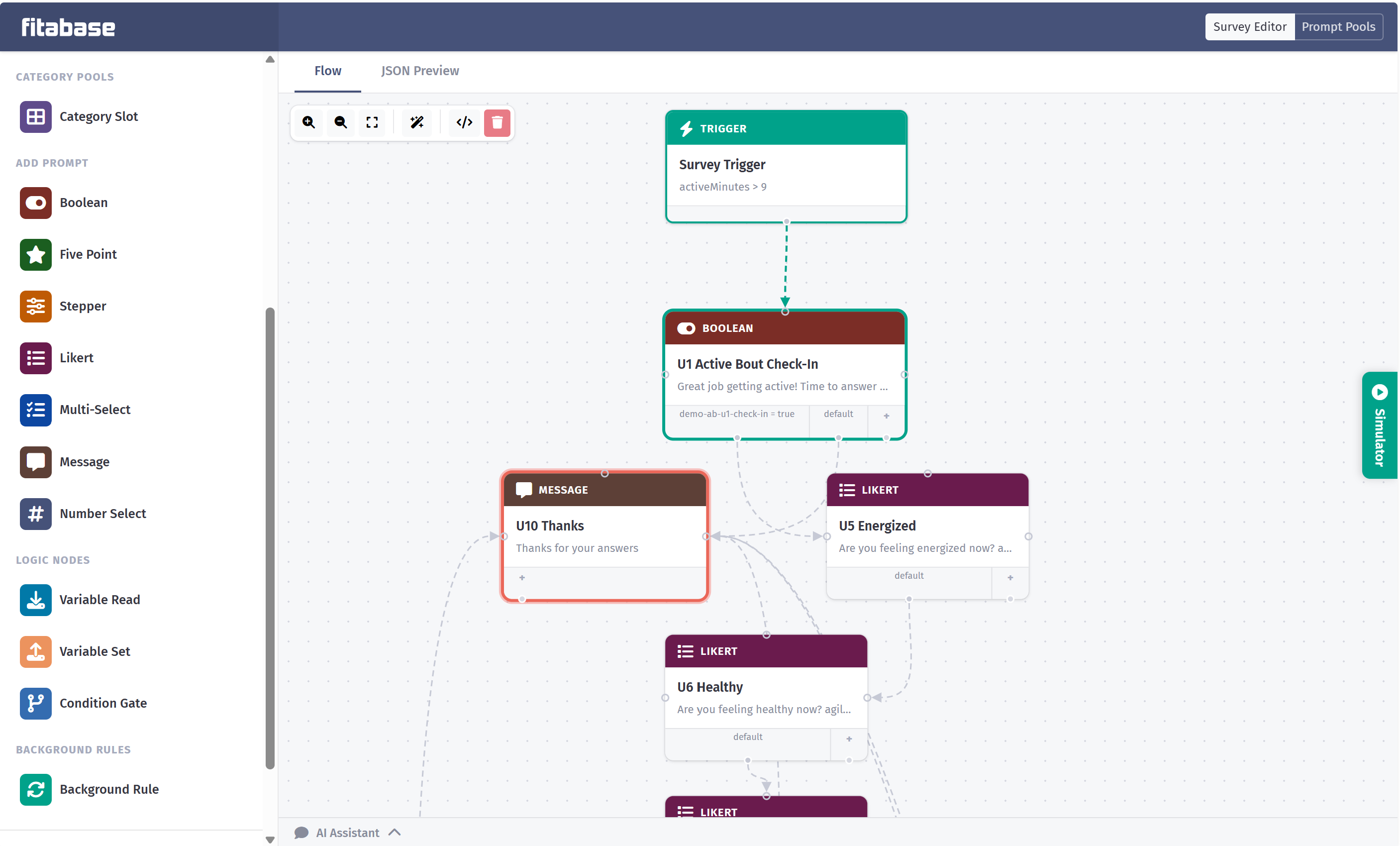

At the core is a new visual planning environment where you can design participant interactions using branching logic, conditional triggers, variables, and randomization. These flows can respond to real-time data, such as triggering a prompt when activity levels cross a threshold you set or adapting questions based on prior responses. The goal is to give research teams a flexible but intuitive way to build and iterate on study designs.
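
For readers who think in code, here is a minimal Python sketch of the kind of logic such a flow encodes: a conditional trigger, a stored variable, randomization, and a branch on a prior response. It is purely illustrative. Engage flows are built visually, and every name here (`Participant`, `next_prompt`, the arm labels, the prompt wording, the step threshold) is a hypothetical stand-in rather than part of the product.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Participant:
    """Hypothetical participant record holding flow variables."""
    participant_id: str
    variables: dict = field(default_factory=dict)


def next_prompt(participant, steps_last_hour, step_threshold, prior_response, rng):
    """Return the prompt text to send, or None if the trigger does not fire."""
    # Conditional trigger: only prompt once activity reaches the threshold.
    if steps_last_hour < step_threshold:
        return None

    # Randomization: assign a study arm once and keep it as a variable.
    if "arm" not in participant.variables:
        participant.variables["arm"] = rng.choice(["short_survey", "long_survey"])

    # Branching logic: adapt the question to the prior response.
    if prior_response == "stressed":
        return "What is contributing to your stress right now?"

    if participant.variables["arm"] == "short_survey":
        return "How are you feeling right now?"
    return "Please rate your mood, energy, and stress from 1 to 5."


participant = Participant("p-001")
rng = random.Random(0)  # seeded so the arm assignment is repeatable
print(next_prompt(participant, steps_last_hour=620, step_threshold=500,
                  prior_response=None, rng=rng))
```

In this sketch the arm is assigned only when the trigger first fires, so a participant who never reaches the threshold is never randomized. Whether that is the right choice depends on the study design.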

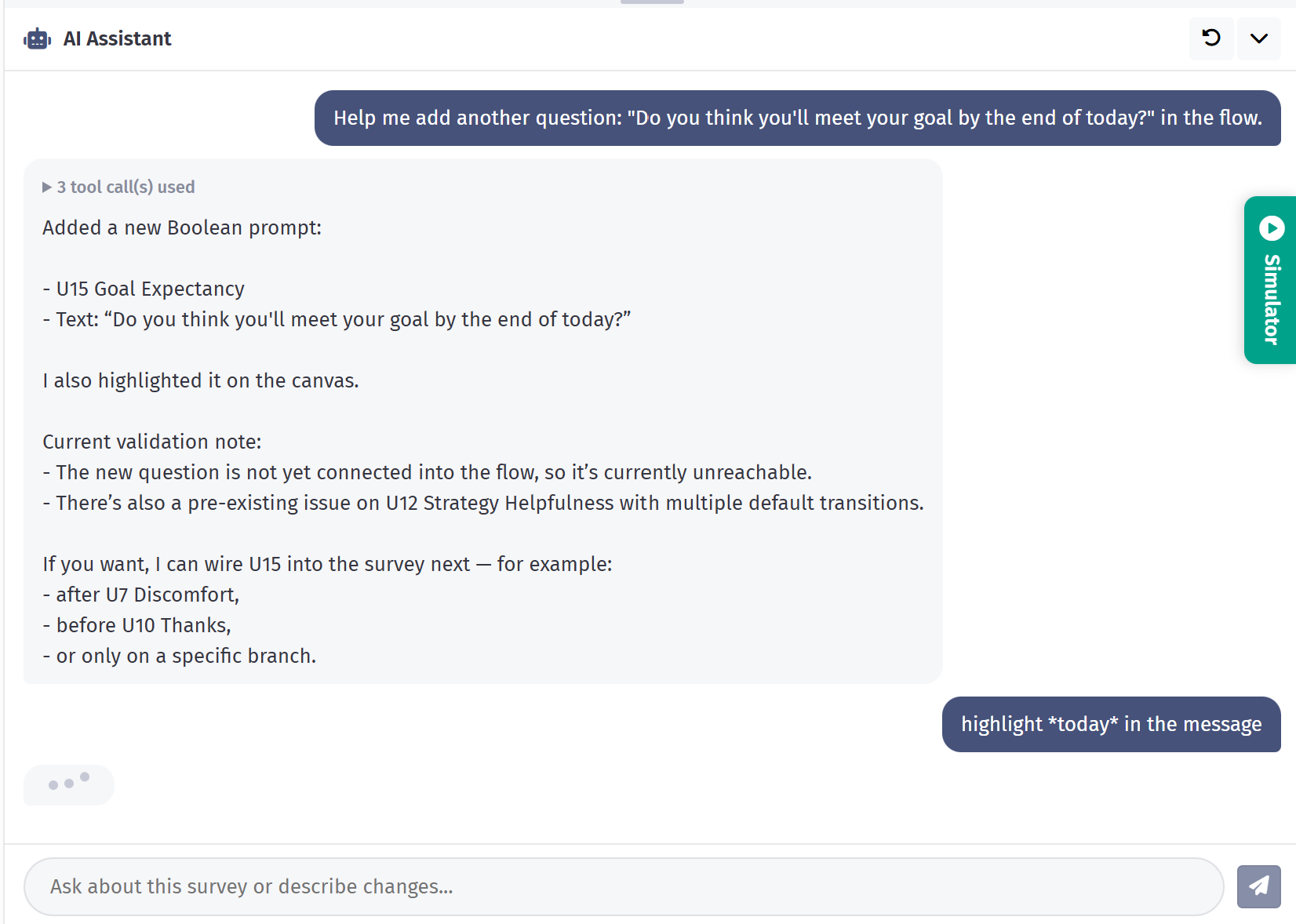

We have also been exploring how AI can make this process easier. One of my favorite features is the AI Assistant, which helps translate plain-language study descriptions into structured interaction flows. In our testing, teams were able to start from a written protocol and quickly generate a working diagram with just a few clarifying inputs.

I am excited for you to see what we have built and how it can support your work. Please share your feedback and ideas as you explore it.

And if you are at SBM in Chicago, stop by our booth during the poster sessions to get a hands-on look at the new designer.